- What NYC Local Law 144 actually requires (and who it applies to)

- The EU AI Act timeline and what counts as 'high risk' in hiring

- Bias audits: who runs them, what they cost, what they look like

- A 7-step compliance checklist you can run this quarter

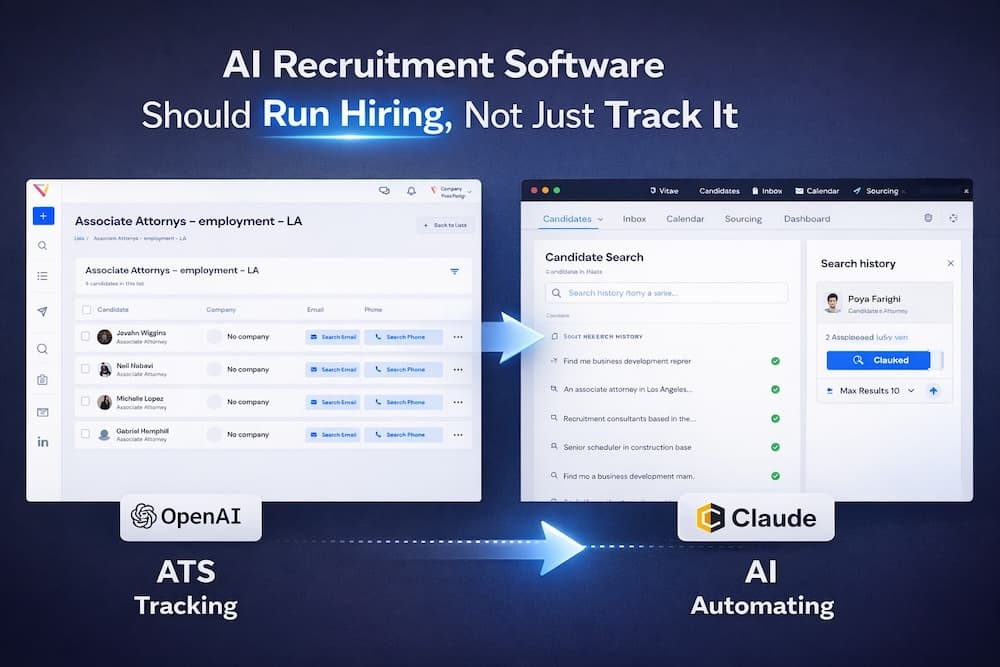

A recruiter using ChatGPT to summarise a call is one thing. A platform using AI to score and rank candidates is a regulated activity in a growing number of jurisdictions. If you do not know the difference, your TA team has a risk you are not pricing in.

This is not a legal opinion. This is the working knowledge a TA leader should have to ask their general counsel the right questions.

NYC Local Law 144 (in effect since 2023)

The first US law to require bias audits of AI hiring tools. The short version:

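- Applies to employers and employment agencies that use an automated employment decision tool (AEDT) for a role that will be performed in NYC. An AEDT is software that substantially assists or replaces discretionary hiring or promotion decisions.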

- Requires an independent bias audit of the AEDT within the year before it is used, which in practice means an annual audit.

- Requires public posting of the audit summary.

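- Requires notice to candidates at least 10 business days before the AEDT is used. The notice must say that the tool will be used and which job qualifications and characteristics it will assess. Details of the data it relies on must also be available.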

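- Penalties: up to $500 for a first violation, then $500 to $1,500 for each subsequent one. Each day a non-compliant AEDT is in use counts as a separate violation, and so does each candidate who did not get notice.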

The EU AI Act (phased through 2026)

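The Act is broader than LL144 and applies to any company using AI to hire in the EU, regardless of where the company is based. It categorises AI systems by risk level. AI used to recruit or select candidates, such as placing targeted job ads, filtering applications and evaluating candidates, is explicitly listed as ‘high risk’. Those high-risk obligations are scheduled to apply from 2 August 2026.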

For a hiring AI system classified as high risk, the Act requires:

- A risk management system that identifies, tests for, and mitigates risks across the system's lifecycle.

- Data governance showing that training, validation, and test data are relevant, representative, and examined for bias.

- Technical documentation a regulator could review.

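- Human oversight built into the workflow, so a trained person can monitor, override, or disregard the system's output. In practice, this means no fully autonomous hire/reject decisions.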

- Automatic logging of the system's operation over its lifetime, plus post-market monitoring.

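Most of these duties sit with the provider, meaning the vendor that builds the system. Employers act as deployers. They must use the system according to the vendor's instructions and assign trained people to oversee it. They must also keep the logs it generates for at least six months and inform workers' representatives before using it in the workplace.

Penalties are tiered by severity. Breaching the high-risk obligations can cost up to 15 million euros or 3% of worldwide annual turnover, whichever is higher. The top tier, up to 35 million euros or 7%, is reserved for prohibited AI practices.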
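The ‘whichever is higher’ rule is plain arithmetic, and it explains why the turnover percentage matters more for large employers. Below is a minimal sketch that assumes the caps in Article 99 of the adopted text. The tier names are hypothetical labels. The sketch ignores the rule that applies the lower of the two amounts to SMEs and start-ups. The results are maximums, not what a regulator would necessarily impose.

```python
from decimal import Decimal

# Fine caps as (fixed amount in EUR, share of worldwide annual turnover),
# assumed from Article 99 of the adopted AI Act. Tier names are hypothetical.
# Simplification: ignores the SME/start-up rule where the LOWER amount applies.
FINE_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),
    "other_obligation": (Decimal("15000000"), Decimal("0.03")),  # includes high-risk duties
    "incorrect_information": (Decimal("7500000"), Decimal("0.01")),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: Decimal) -> Decimal:
    """Upper bound of a fine: the higher of the fixed cap and the turnover share."""
    fixed_cap, turnover_share = FINE_TIERS[tier]
    return max(fixed_cap, turnover_share * worldwide_turnover_eur)


print(max_fine_eur("other_obligation", Decimal("1000000000")))  # 30000000.00
```

At 1 billion euros in turnover, 3% is 30 million euros, which exceeds the 15 million euro fixed cap, so the percentage sets the ceiling. At 200 million euros in turnover, the fixed cap is the higher figure.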

Other jurisdictions worth tracking

- Illinois Artificial Intelligence Video Interview Act: requires notice, an explanation of how the AI works, and consent before AI analyses video interviews.

- Colorado AI Act (effective 2026): a risk-based framework similar to the EU's, covering high-risk AI used in consequential decisions such as employment.

- Maryland HB 1202: requires applicant consent before facial recognition is used during interviews.

- Federal US (proposed): the Algorithmic Accountability Act has been re-introduced multiple times. Watch this space.

What a bias audit actually looks like

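The audit is a statistical analysis of how the AI selects or scores candidates across protected demographic groups. For LL144, those groups are sex, race/ethnicity, and their intersections. The auditor computes ‘impact ratios’: each group's selection rate divided by the selection rate of the most-selected group. LL144 requires publishing these ratios but does not set a pass mark. The usual benchmark is the EEOC's ‘four-fifths rule’, under which a ratio below 80% is generally treated as evidence of disparate impact.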
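The impact-ratio arithmetic is short enough to sketch. This is a minimal illustration, not an auditor's methodology. The group labels and counts are made up, and `GroupOutcome`, `selection_impact_ratios`, `scoring_impact_ratios` and `below_four_fifths` are hypothetical names. Some tools score candidates instead of selecting them. For those, the second function assumes LL144's ‘scoring rate’, which is the share of a group that scores above the median of the whole sample.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class GroupOutcome:
    group: str      # demographic category (hypothetical labels in the example)
    assessed: int   # candidates the tool assessed in this category
    selected: int   # candidates the tool advanced or selected


def _ratios(rates: dict[str, float]) -> dict[str, float]:
    """Divide every group's rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("No group was selected, so impact ratios are undefined.")
    return {group: rate / best for group, rate in rates.items()}


def selection_impact_ratios(outcomes: list[GroupOutcome]) -> dict[str, float]:
    """Impact ratios for tools that select or advance candidates."""
    rates = {o.group: o.selected / o.assessed for o in outcomes if o.assessed > 0}
    return _ratios(rates)


def scoring_impact_ratios(scores: dict[str, list[float]]) -> dict[str, float]:
    """Impact ratios for tools that score candidates. A group's scoring rate is
    the share of its members scoring above the median of the whole sample."""
    cutoff = median(s for group_scores in scores.values() for s in group_scores)
    rates = {
        group: sum(s > cutoff for s in group_scores) / len(group_scores)
        for group, group_scores in scores.items()
        if group_scores
    }
    return _ratios(rates)


def below_four_fifths(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls under the four-fifths benchmark."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


ratios = selection_impact_ratios([
    GroupOutcome("group_a", assessed=200, selected=60),  # rate 0.30
    GroupOutcome("group_b", assessed=150, selected=30),  # rate 0.20
    GroupOutcome("group_c", assessed=100, selected=27),  # rate 0.27
])
print(below_four_fifths(ratios))  # ['group_b']
```

Here group_b's ratio is 0.20 / 0.30, about 0.67, so it falls below the 80% benchmark. A real audit typically goes further, with intersectional categories, rules for very small groups, and constraints on which historical or test data the auditor may use.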

A typical audit costs $5K to $30K depending on scope and auditor. Several specialised firms now do nothing else. Many enterprise AI vendors commission an independent audit and share the summary, which customers can reference. Under LL144, though, the compliance obligation stays with the employer.

The 7-step compliance checklist

- Inventory every AI tool used in hiring. Include the ones recruiters are using on their own (ChatGPT, Claude, etc.).

- Classify each tool by what it does. Drafting? Scoring? Ranking? Decision-making? Legal exposure rises as you move from drafting toward decision-making.

- Identify your jurisdictions. Where are candidates located? Where will they work? Each jurisdiction adds its own rules.

- Get the bias audit summaries from your vendors. If they cannot produce one, that is a flag.

- Update your candidate-facing notice. Most career sites need a paragraph saying AI tools may assist in screening. Some jurisdictions, including NYC under LL144, also require details of what the tool assesses.

- Build human-in-the-loop into every consequential decision. Never let AI auto-reject without a recruiter sign-off.

- Keep logs. Every AI decision should be traceable, attributable, and reviewable.
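The last two steps can be sketched together: a recruiter sign-off gate plus an append-only record that makes each decision traceable, attributable and reviewable. This is a hypothetical illustration, not any vendor's API. `Outcome`, `DecisionRecord` and `DecisionLog` are made-up names, and the in-memory list stands in for whatever durable store you actually use.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Outcome(Enum):
    ADVANCE = "advance"
    REJECT = "reject"


@dataclass(frozen=True)
class DecisionRecord:
    candidate_id: str
    tool_name: str                # which AI tool made the recommendation (traceable)
    tool_recommendation: Outcome
    final_outcome: Outcome
    reviewer: str                 # recruiter who signed off (attributable)
    rationale: str                # why they agreed or overrode (reviewable)
    decided_at: datetime


class DecisionLog:
    """Append-only, in-memory log (hypothetical). A real system would persist it."""

    def __init__(self) -> None:
        self._records: list[DecisionRecord] = []

    def record(self, candidate_id: str, tool_name: str, recommendation: Outcome,
               final_outcome: Outcome, reviewer: str, rationale: str) -> DecisionRecord:
        # Human-in-the-loop gate: no decision is final without a named recruiter.
        if not reviewer.strip():
            raise PermissionError("Every final decision needs a named recruiter sign-off.")
        # A rejection must also record why, so it can be reviewed later.
        if final_outcome is Outcome.REJECT and not rationale.strip():
            raise ValueError("A rejection needs a recorded rationale.")
        entry = DecisionRecord(candidate_id, tool_name, recommendation, final_outcome,
                               reviewer, rationale, datetime.now(timezone.utc))
        self._records.append(entry)
        return entry

    def trail(self, candidate_id: str) -> list[DecisionRecord]:
        """Full audit trail for one candidate, oldest first."""
        return [r for r in self._records if r.candidate_id == candidate_id]


log = DecisionLog()
log.record("cand-001", "resume-screener", Outcome.REJECT, Outcome.ADVANCE,
           reviewer="j.smith", rationale="Relevant experience the model missed")
```

The record stores the tool's recommendation next to the final outcome. That shows where recruiters overrode the AI, which is evidence that oversight is real and not a rubber stamp. It is also the kind of per-candidate audit trail worth asking vendors for.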

What this means for vendor selection

Two questions to ask any AI hiring vendor:

- Can you produce a bias audit on demand? If not, why not?

- Are decisions logged and reviewable? Can I see the full audit trail per candidate?

Vendors who cannot answer both confidently are creating risk for you. Vendors who can are doing the work.

The bottom line

Compliance is no longer an enterprise-only concern. If you use an AEDT on your first NYC role, LL144 applies, and candidate notice is due before the tool is used. Once the high-risk provisions take effect, the AI Act applies to your first EU role in the same way. The good news: the compliance bar is achievable, the audits are commoditising, and modern AI hiring tools are being built around these requirements from day one.