How to Measure Time-to-Hire Improvements with AI Recruiting Software

A measurement framework for time-to-hire under AI recruiting software, with the early signals, the steady-state benchmarks, and the traps to avoid.

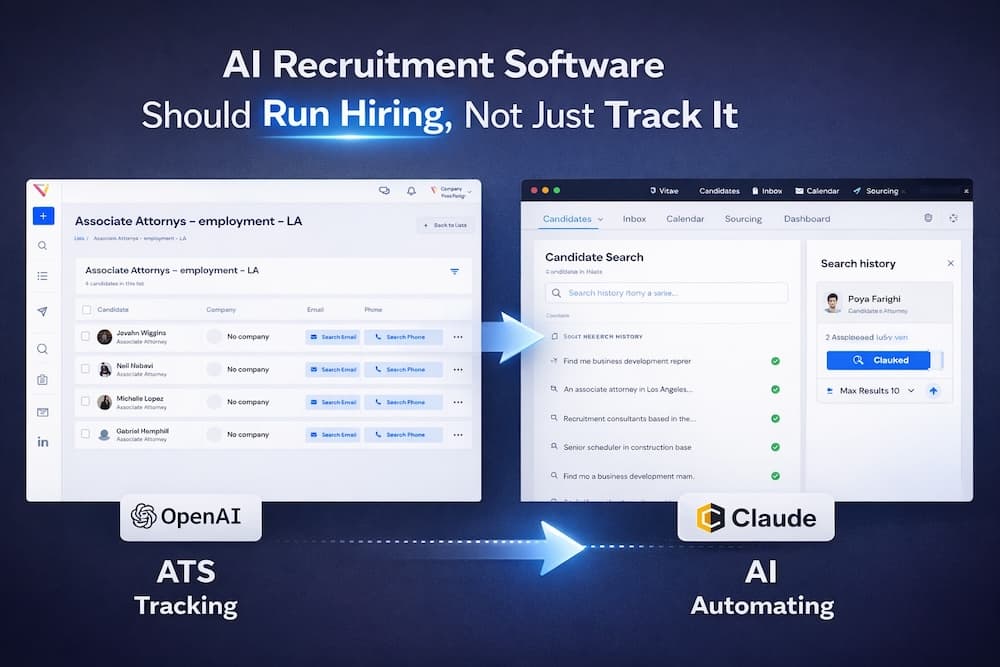

Time-to-hire is the metric most teams look at first and report on most often, and it is also the easiest one to fool yourself about. AI recruiting software does compress it, often dramatically, but only if you measure consistently and resist the urge to read the first two weeks of data as the new steady state.

Define the metric before you change anything

Time-to-hire and time-to-fill are not the same number. Time-to-fill runs from the day the role opens to the day an offer is accepted, and is what most operators care about for budget purposes. Time-to-hire runs from first candidate contact to offer accepted, and is more useful for measuring recruiter and platform throughput. Pick one, write the definition down, and use the same one before and after AI is in the funnel. The baseline and benchmarks in this guide are stated as time-to-fill.
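To make the difference concrete, here is a minimal sketch of both definitions as date arithmetic. The function names and the sample dates are hypothetical and chosen only for illustration; in practice the three timestamps would come from your applicant tracking system.

```python
from datetime import date


def time_to_fill(role_opened: date, offer_accepted: date) -> int:
    """Days from role open to offer accepted."""
    return (offer_accepted - role_opened).days


def time_to_hire(first_contact: date, offer_accepted: date) -> int:
    """Days from first candidate contact to offer accepted."""
    return (offer_accepted - first_contact).days


# Hypothetical requisition, for illustration only.
role_opened = date(2024, 1, 8)
first_contact = date(2024, 1, 22)
offer_accepted = date(2024, 2, 26)

ttf = time_to_fill(role_opened, offer_accepted)
tth = time_to_hire(first_contact, offer_accepted)
print(f"time-to-fill: {ttf} days, time-to-hire: {tth} days")
```

The same role produces two different numbers (49 and 35 days here), which is why the definition has to be fixed before any before-and-after comparison.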

Snapshot the baseline

Before turning AI tools on, capture the numbers you will be comparing against. The minimum useful baseline is 90 days of historical data, broken out by role type. Engineering, sales, and operations roles run on different clocks, and AI compression is uneven across them.

- Average time-to-fill, by role family

- Median (not just average) time-to-fill, because a few long-running roles skew the average

- Pipeline conversion at each stage (apply, screen, first round, offer)

- Source-of-hire breakdown (inbound, sourced, agency, referral)
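The baseline items above can be computed with a few lines of standard-library Python. This is a sketch under stated assumptions: the record layout, the function names, and every number in the sample data are hypothetical, and a real export from your applicant tracking system will look different.

```python
from collections import Counter, defaultdict
from statistics import mean, median

# Hypothetical closed requisitions: (role_family, days_to_fill, source_of_hire).
closed_roles = [
    ("engineering", 62, "sourced"),
    ("engineering", 48, "referral"),
    ("engineering", 55, "inbound"),
    ("sales", 30, "inbound"),
    ("sales", 41, "agency"),
    ("operations", 35, "inbound"),
]

# Hypothetical candidate counts reaching each stage, in funnel order.
stage_counts = {"apply": 400, "screen": 120, "first round": 40, "offer": 8}


def time_to_fill_by_family(roles):
    """Mean, median, and sample size of days-to-fill per role family."""
    days_by_family = defaultdict(list)
    for family, days, _source in roles:
        days_by_family[family].append(days)
    return {
        family: {"mean": mean(days), "median": median(days), "n": len(days)}
        for family, days in days_by_family.items()
    }


def stage_conversion(counts):
    """Conversion rate from each stage to the next (dicts keep insertion order)."""
    stages = list(counts)
    return {
        f"{prev} -> {nxt}": counts[nxt] / counts[prev]
        for prev, nxt in zip(stages, stages[1:])
    }


def source_share(roles):
    """Share of hires attributed to each source."""
    counts = Counter(source for _family, _days, source in roles)
    total = sum(counts.values())
    return {source: n / total for source, n in counts.items()}


baseline = time_to_fill_by_family(closed_roles)
conversion = stage_conversion(stage_counts)
sources = source_share(closed_roles)
```

Storing the sample size next to each mean and median makes it obvious later when a role family has too few closed roles to compare.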

The 30, 60, 90 day arc

The signal arrives in three phases. Reading any phase as the final answer is the most common measurement mistake.

Day 14: first throughput signal

By the end of week two, you should see sourcing throughput rise visibly. AI search-and-match returns more shortlists per recruiter per day. This is a leading indicator, not a result. Time-to-fill on the first cohort of roles will not be measurable yet.

Day 30 to 60: cohort completion

Around day 30, the first roles started under the new motion begin to close. The cohort is small and noisy. Time-to-fill numbers will look promising but should not be quoted to leadership as steady-state yet.

Day 90: steady-state benchmark

By day 90 you have a meaningful cohort with full-cycle data. Across 200+ teams using Vitae, the median team sees a 43% reduction in median time-to-fill at this point, with the strongest teams reaching 50% or more and the weakest seeing 20%. This is the number to budget against and the one to share externally.

The 90-day number is the real benchmark. Earlier numbers are leading indicators that are easy to misread as results.
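The reduction figure itself is simple arithmetic: the drop in median time-to-fill divided by the baseline median. The sketch below assumes you have one list of days-to-fill for the baseline period and one for the day-90 cohort; the function name and sample values are hypothetical.

```python
from statistics import median


def median_reduction(baseline_days, cohort_days):
    """Fractional reduction in median time-to-fill versus the baseline."""
    before = median(baseline_days)
    after = median(cohort_days)
    return (before - after) / before


# Hypothetical samples, for illustration only.
baseline_days = [40, 50, 60, 45, 55]  # median is 50 days
day90_days = [28, 30, 35, 26, 33]     # median is 30 days

reduction = median_reduction(baseline_days, day90_days)
print(f"median time-to-fill reduction: {reduction:.0%}")
```

Run the same calculation per role family rather than on the pooled data, so that a shift in the mix of roles is not mistaken for compression.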

Traps to avoid

- Mixing definitions: switching between time-to-hire and time-to-fill mid-measurement makes results uninterpretable

- Cherry-picking role types: AI compresses high-volume roles more than executive roles; report on both

- Ignoring quality: speed without offer-acceptance and 6-month-retention checks creates the wrong incentive

- Reporting averages instead of medians: a single 200-day enterprise role distorts the average
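The last trap is easy to demonstrate. In this small sketch, with made-up numbers, a single 200-day role is added to five otherwise typical roles:

```python
from statistics import mean, median

# Hypothetical days-to-fill for five typical roles.
typical = [30, 32, 35, 38, 40]
# The same roles plus one 200-day enterprise role.
with_outlier = typical + [200]

typical_mean, typical_median = mean(typical), median(typical)
outlier_mean, outlier_median = mean(with_outlier), median(with_outlier)

print(f"without outlier: mean={typical_mean}, median={typical_median}")
print(f"with outlier:    mean={outlier_mean}, median={outlier_median}")
```

The mean jumps from 35 to 62.5 days while the median only moves from 35 to 36.5, which is why the median is the safer headline number.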

What to report

A useful internal report at day 90 covers four measures: median time-to-fill before and after, by role family; offer-acceptance rate before and after; source-of-hire shift; and tooling spend per recruiter. The combination tells you whether AI compressed the funnel without distorting quality. For the wider context on what to expect, see the full ROI breakdown.
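One way to keep the four measures together is a small record per role family. The sketch below is illustrative only: the class name, field names, sample values, and the two-point tolerance on offer-acceptance rate are all assumptions, not thresholds taken from this guide.

```python
from dataclasses import dataclass


@dataclass
class Day90Report:
    role_family: str
    median_ttf_before: float          # days
    median_ttf_after: float           # days
    offer_acceptance_before: float    # fraction of offers accepted
    offer_acceptance_after: float
    source_share_before: dict         # source -> share of hires
    source_share_after: dict
    tooling_spend_per_recruiter: float

    def ttf_reduction(self) -> float:
        """Fractional reduction in median time-to-fill."""
        return (self.median_ttf_before - self.median_ttf_after) / self.median_ttf_before

    def source_shift(self) -> dict:
        """Change in share of hires per source (after minus before)."""
        sources = set(self.source_share_before) | set(self.source_share_after)
        return {
            s: self.source_share_after.get(s, 0.0) - self.source_share_before.get(s, 0.0)
            for s in sorted(sources)
        }

    def compressed_without_quality_drop(self, tolerance: float = 0.02) -> bool:
        """True if time-to-fill fell and acceptance did not drop by more than
        `tolerance` (an assumed threshold; pick your own)."""
        acceptance_change = self.offer_acceptance_after - self.offer_acceptance_before
        return self.ttf_reduction() > 0 and acceptance_change >= -tolerance


# Hypothetical values, for illustration only.
report = Day90Report(
    role_family="engineering",
    median_ttf_before=50,
    median_ttf_after=30,
    offer_acceptance_before=0.80,
    offer_acceptance_after=0.82,
    source_share_before={"inbound": 0.5, "sourced": 0.2, "agency": 0.2, "referral": 0.1},
    source_share_after={"inbound": 0.4, "sourced": 0.4, "agency": 0.1, "referral": 0.1},
    tooling_spend_per_recruiter=1200.0,
)
print(report.ttf_reduction(), report.source_shift(), report.compressed_without_quality_drop())
```

A 6-month-retention field could be added the same way once the first AI-era hires reach that mark.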