Can AI Recruiting Tools Actually Find Better Candidates Than Humans?

An honest comparison of where AI recruiting tools beat human recruiters on candidate quality, where humans still win, and how the best teams combine both.

The honest answer is yes on coverage and consistency, no on judgement, and the question itself is the wrong frame. AI recruiting tools and human recruiters are not direct substitutes; they are stages of the same pipeline. The teams that get the best candidate quality use AI to widen the funnel and humans to narrow it to the shortlist that produces the offer.

Where AI consistently wins on quality

Coverage

A human recruiter can reasonably review 30 to 60 candidate profiles for a single role before fatigue degrades judgement. AI search-and-match ranks the entire active pool against the brief: tens of thousands of profiles in seconds. The candidate who would have been on page 14 of search results gets seen. Coverage is the biggest single quality win.

Consistency

Two recruiters scoring the same candidate often disagree on fit. AI applies the same rubric to every profile, every time. The score may not always be right, but it is always consistent, which makes it auditable and improvable. Human inconsistency is invisible until you measure it.
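One common way to make that disagreement visible is Cohen's kappa, which measures how often two raters agree beyond what chance alone would produce. The sketch below is illustrative only: the function name, the "fit"/"no" labels, and the eight sample ratings are assumptions made up for the example, not data or methods from any particular tool.

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Agreement between two raters, corrected for chance agreement."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("need two equal-length, non-empty rating lists")
    n = len(ratings_a)
    # Observed agreement: share of candidates both recruiters labelled the same.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Expected agreement if each recruiter labelled at random,
    # keeping their own overall label frequencies.
    freq_a, freq_b = Counter(ratings_a), Counter(ratings_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical fit labels from two recruiters for the same eight candidates.
recruiter_1 = ["fit", "fit", "no", "fit", "no", "no", "fit", "no"]
recruiter_2 = ["fit", "no", "no", "fit", "fit", "no", "fit", "no"]
kappa = cohens_kappa(recruiter_1, recruiter_2)
```

A kappa of 1 means perfect agreement and 0 means no better than chance. In this made-up sample the recruiters agree on six of eight candidates, but half of that agreement would be expected by chance.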

Time-of-day fatigue

Recruiter judgement degrades after the third hour of profile review. AI does not get tired. For high-volume sourcing this is a meaningful quality lift; the candidate reviewed at 4pm gets the same attention as the one reviewed at 9am.

Where humans still win, decisively

Senior and executive judgement

Beyond a certain seniority, candidate quality is shaped by signal that does not appear in profiles: how someone made trade-offs in their last role, why they left, what their reputation is in their industry. Those are recruiter conversations, not AI calculations. AI accelerates the work around the senior recruiter; it does not replace them.

Cultural and team-fit calls

AI can summarise communication style and surface red flags at scale, but it cannot read the room of a hiring panel. Final fit calls remain human, and should.

Edge cases on background

Career-changers, returning parents, and candidates with unusual paths are the cases where rigid scoring is most likely to underrate strong fits. Recruiter override on these candidates is critical, which is why AI scoring should always be a recommendation a human can move, not a gate that blocks the funnel.

AI does coverage and consistency at a scale humans cannot match. Humans do judgement at a depth AI cannot match. The win comes from combining both, not picking a side.

What “better quality” actually means

Quality is not one number. The right way to measure it is across four metrics, compared before and after AI enters the funnel.

- Offer-acceptance rate: are the shortlisted candidates the ones who actually accept?
- First-90-day retention: do the hired candidates stay through onboarding?
- Hiring-manager NPS: are hiring managers (HMs) more or less satisfied with the shortlists they get?
- Pipeline diversity: is the funnel widening or narrowing for under-represented groups?

Customer data on Vitae shows offer-acceptance rises 8 to 15% on AI-shortlisted candidates compared with manual sourcing for the same roles. Retention is roughly unchanged or marginally better. Hiring-manager NPS rises noticeably because shortlists arrive faster and consistently match the brief.
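The before-and-after comparison across the four metrics can be sketched as follows. This is a minimal illustration, not a reporting implementation: the `FunnelSnapshot` field names are assumptions, pipeline diversity is simplified to the under-represented share of the shortlist, and every number in the example is a placeholder invented for the sketch rather than customer data.

```python
from dataclasses import dataclass


@dataclass
class FunnelSnapshot:
    """Raw counts for one period. Field names are illustrative assumptions."""
    offers_made: int
    offers_accepted: int
    hires: int
    hires_retained_90d: int
    hm_promoters: int
    hm_detractors: int
    hm_responses: int
    shortlisted: int
    shortlisted_underrepresented: int


def quality_metrics(s: FunnelSnapshot) -> dict[str, float]:
    return {
        "offer_acceptance_rate": s.offers_accepted / s.offers_made,
        "retention_90d": s.hires_retained_90d / s.hires,
        # Standard NPS: percent promoters minus percent detractors.
        "hiring_manager_nps": 100 * (s.hm_promoters - s.hm_detractors) / s.hm_responses,
        # Simplification: diversity as the under-represented share of the shortlist.
        "underrepresented_share": s.shortlisted_underrepresented / s.shortlisted,
    }


def compare(before: FunnelSnapshot, after: FunnelSnapshot) -> dict[str, float]:
    """Change in each metric (after minus before)."""
    b, a = quality_metrics(before), quality_metrics(after)
    return {name: a[name] - b[name] for name in b}


# Placeholder counts, invented purely to exercise the functions.
before = FunnelSnapshot(40, 24, 24, 21, 10, 6, 20, 200, 50)
after = FunnelSnapshot(40, 28, 28, 25, 13, 4, 20, 200, 60)
deltas = compare(before, after)
```

Reporting all four deltas together guards against reading an improvement in one metric, such as offer acceptance, as an overall quality gain while another, such as pipeline diversity, moves the wrong way.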

The combination that beats either alone

The winning pattern: AI scores the entire pool against the brief, surfaces the top tier with explanations, and the recruiter reviews and re-ranks the top 30 with their own judgement before the hiring manager sees a shortlist. This costs less recruiter time per role and produces better candidates than either pure-human or pure-AI workflows.
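The workflow above can be sketched in a few lines. Everything here is a simplified assumption for illustration: the skill-overlap score stands in for whatever matching model a real tool uses, and the names `score_pool`, `shortlist`, `promote`, and `recruiter_rerank` are hypothetical. The `promote` argument shows the score acting as a recommendation a recruiter can move rather than a gate.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    skills: set[str]


@dataclass
class Scored:
    candidate: Candidate
    score: float
    explanation: str


def score_pool(pool, required_skills):
    """Stand-in for the AI scoring step: fraction of required skills matched."""
    scored = []
    for c in pool:
        matched = sorted(c.skills & required_skills)
        score = len(matched) / len(required_skills)
        scored.append(Scored(c, score, f"matches: {', '.join(matched) or 'none'}"))
    # Highest score first; every profile in the pool is scored.
    return sorted(scored, key=lambda s: s.score, reverse=True)


def shortlist(pool, required_skills, recruiter_rerank, top_n=30, promote=()):
    ranked = score_pool(pool, required_skills)
    top = ranked[:top_n]
    # Recruiter override: pull named candidates into review
    # even if they scored below the cut.
    top += [s for s in ranked[top_n:] if s.candidate.name in promote]
    # Human judgement has the final say on ordering.
    return recruiter_rerank(top)


# Hypothetical pool and brief.
pool = [
    Candidate("Ana", {"python", "sql", "airflow"}),
    Candidate("Ben", {"python", "sql"}),
    Candidate("Cy", {"python"}),
    Candidate("Dee", {"excel"}),  # career-changer a rigid score underrates
]
brief = {"python", "sql", "airflow"}


def recruiter_rerank(tier):
    # Hypothetical judgement call: the recruiter moves Dee to the top.
    return sorted(tier, key=lambda s: s.candidate.name != "Dee")


final = shortlist(pool, brief, recruiter_rerank, top_n=2, promote={"Dee"})
names = [s.candidate.name for s in final]
```

Keeping the explanation string alongside each score gives the recruiter something to check during re-ranking, and passing the re-rank step in as a function keeps the human decision separate from the scoring logic.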

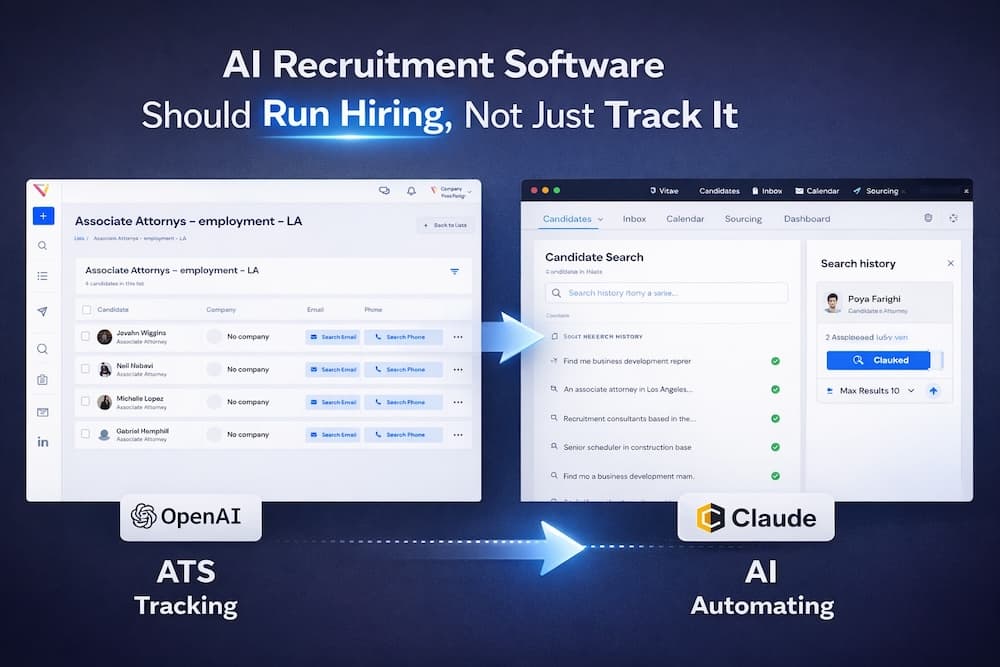

For the deeper architecture behind this, see how AI-native recruiting differs from a traditional ATS. For accuracy specifics on the screening side, read how accurate AI resume screening actually is.