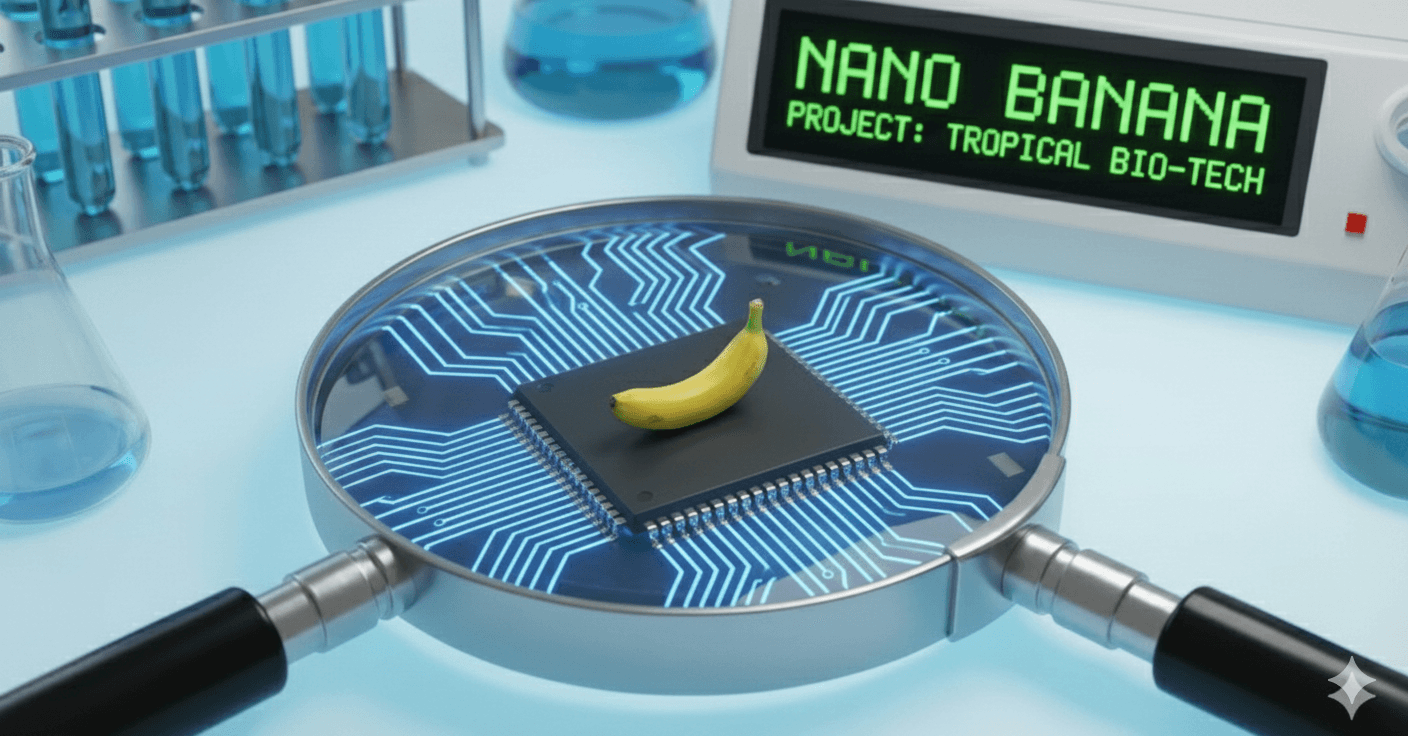

Nano Banana: The Image Model Redefining Visual AI Inside Google Gemini

An overview of Google's Nano Banana image model inside Gemini, why it matters for content production, and how it compares to other image models.

Google's Nano Banana image model, embedded inside Gemini, has quietly emerged as one of the most capable image-generation tools of 2025. It is not the loudest model in the category. It might be the best.

What makes it different

Three things. First, its prompt fidelity is markedly higher than that of competitors: the images it produces match nuanced text prompts more closely. Second, the editing flow inside Gemini lets you iterate on an image conversationally, with natural-language modifications applied to the same canvas. Third, the cost per image is meaningfully lower than that of rival flagship models.

Where it fits

Marketing and content teams are the first beneficiaries. The conversational edit loop dramatically shortens the cycle from idea to publish-ready image. Designers do not lose their jobs. They get a faster sketching partner.

The unlock is not generating an image once. It is generating an image, then asking it to be slightly different, ten times in a row.
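The loop described above can be sketched in a few lines. This is a minimal illustration of the pattern, not Gemini's actual API: `EditSession`, `EditFn`, and `fake_edit` are hypothetical names, and `fake_edit` is a placeholder that only tags the image bytes with each instruction so the flow can run without a real model.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical stand-in for an image-editing backend: takes the current image
# bytes plus a natural-language instruction and returns new image bytes.
# This is an assumption for illustration, not a real Gemini call.
EditFn = Callable[[bytes, str], bytes]


@dataclass
class EditSession:
    """Keeps one working image and the list of edits applied to it."""
    edit_fn: EditFn
    image: bytes
    history: List[str] = field(default_factory=list)

    def revise(self, instruction: str) -> bytes:
        # Each edit starts from the current image, not from a blank canvas.
        self.image = self.edit_fn(self.image, instruction)
        self.history.append(instruction)
        return self.image


def fake_edit(image: bytes, instruction: str) -> bytes:
    # Placeholder: a real backend would return a re-rendered image.
    return image + b"|" + instruction.encode("utf-8")


session = EditSession(edit_fn=fake_edit, image=b"draft")
for step in ["warmer lighting", "move the logo left", "crop to square"]:
    session.revise(step)

print(session.history)
```

The point of the design is that every request builds on the previous result, so the tenth small change costs the same effort as the first.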

How it compares

- Versus Midjourney: similar quality on aesthetic prompts, better at conversational editing

- Versus DALL-E: better text rendering, faster iteration

- Versus Stable Diffusion variants: less customization, more polish out of the box

What it signals

Image generation is now a feature, not a category. Embedding the model inside a chat interface, rather than shipping a separate dedicated app, looks set to become the dominant pattern. Nano Banana is the clearest example of that pattern executed well.